The challenge of scaling cloud service expertise

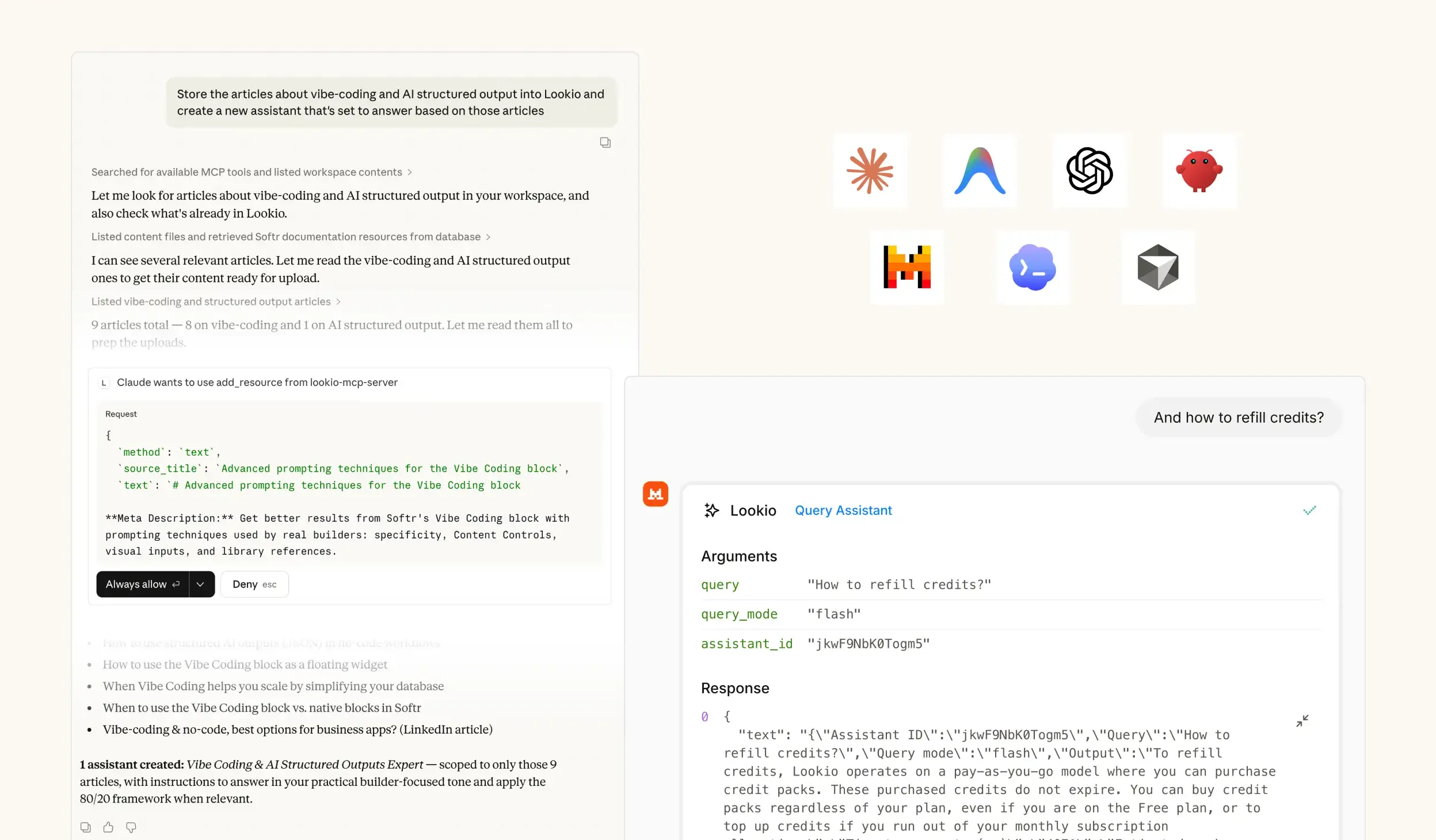

When a Cloud Architect or Solution Engineer tries to support a growing client base, they hit a persistent information wall. In the cloud sector, technical documentation is vast, multi-layered, and constantly evolving. Your team is likely buried in AWS whitepapers, Azure reference architectures, and internal deployment logs, yet finding a single configuration detail can still take 15 minutes of manual searching.

The high cost of expert interruptions

Speed is not the only thing that breaks in this workflow; your top talent burns out too. Every time a junior support agent or a sales lead interrupts a senior architect to verify a compliance detail or a networking limit, you lose high-value billable time. The result is a bottleneck: knowledge lives in the heads of a few experts instead of being accessible across the organization. As you scale, this dependency creates SLA risks and inconsistent advice that can jeopardize client infrastructure.

Why standard tools fall short

Cloud Service Providers (CSPs) have likely attempted several stopgap solutions, each with significant flaws:

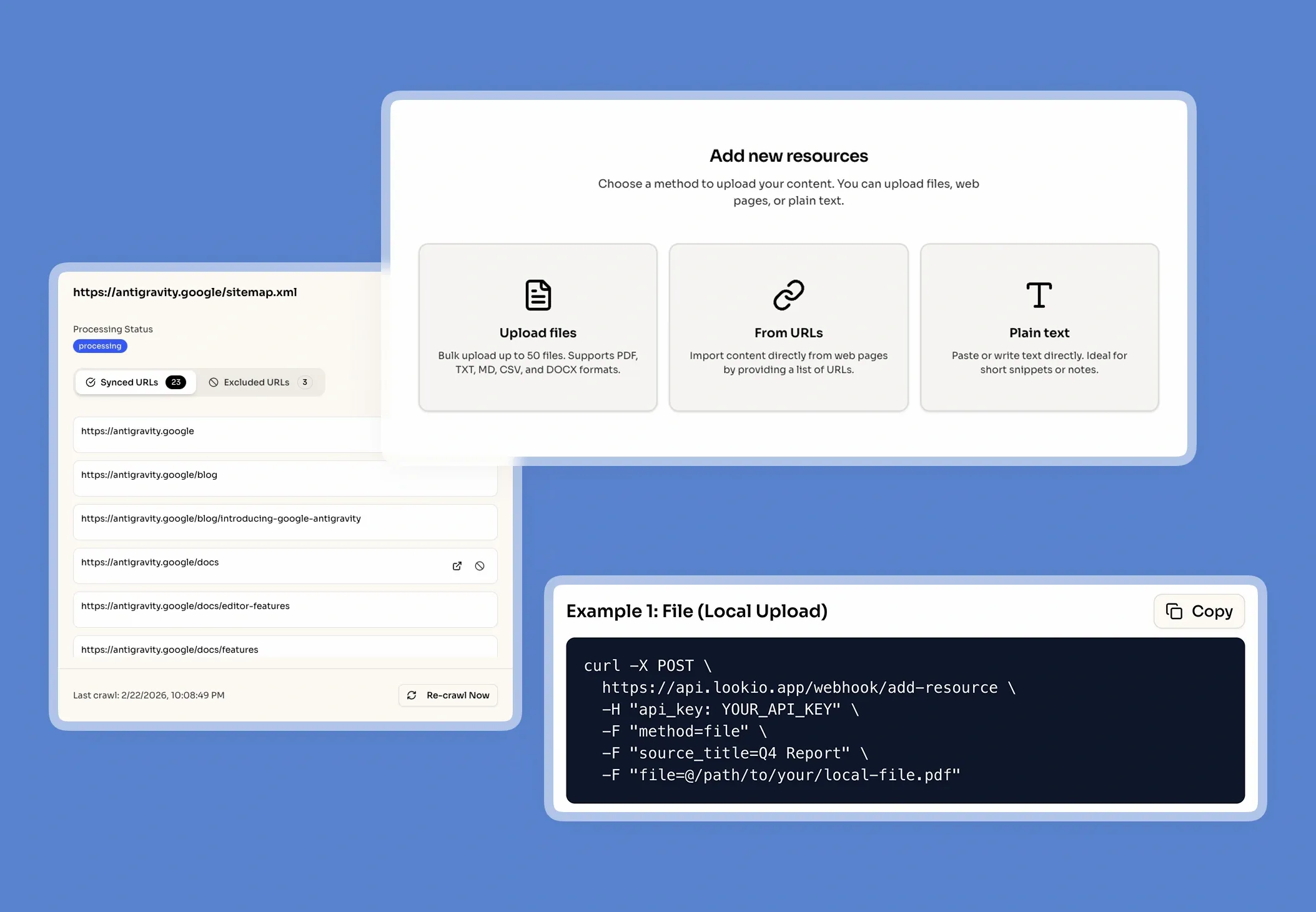

- Internal Wikis and Confluence: These systems rely largely on keyword matching. If an engineer searches for "isolated subnets" but the doc uses the term "private enclaves," the search fails. Keyword search cannot keep up with the synonyms and vendor-specific terms that fill cloud documentation.

- Generic AI (ChatGPT): While impressive, generic models often hallucinate specific technical limits or answer from outdated document versions. In cloud services, being 90% right is 100% wrong: one wrong CLI command can bring down a production environment.

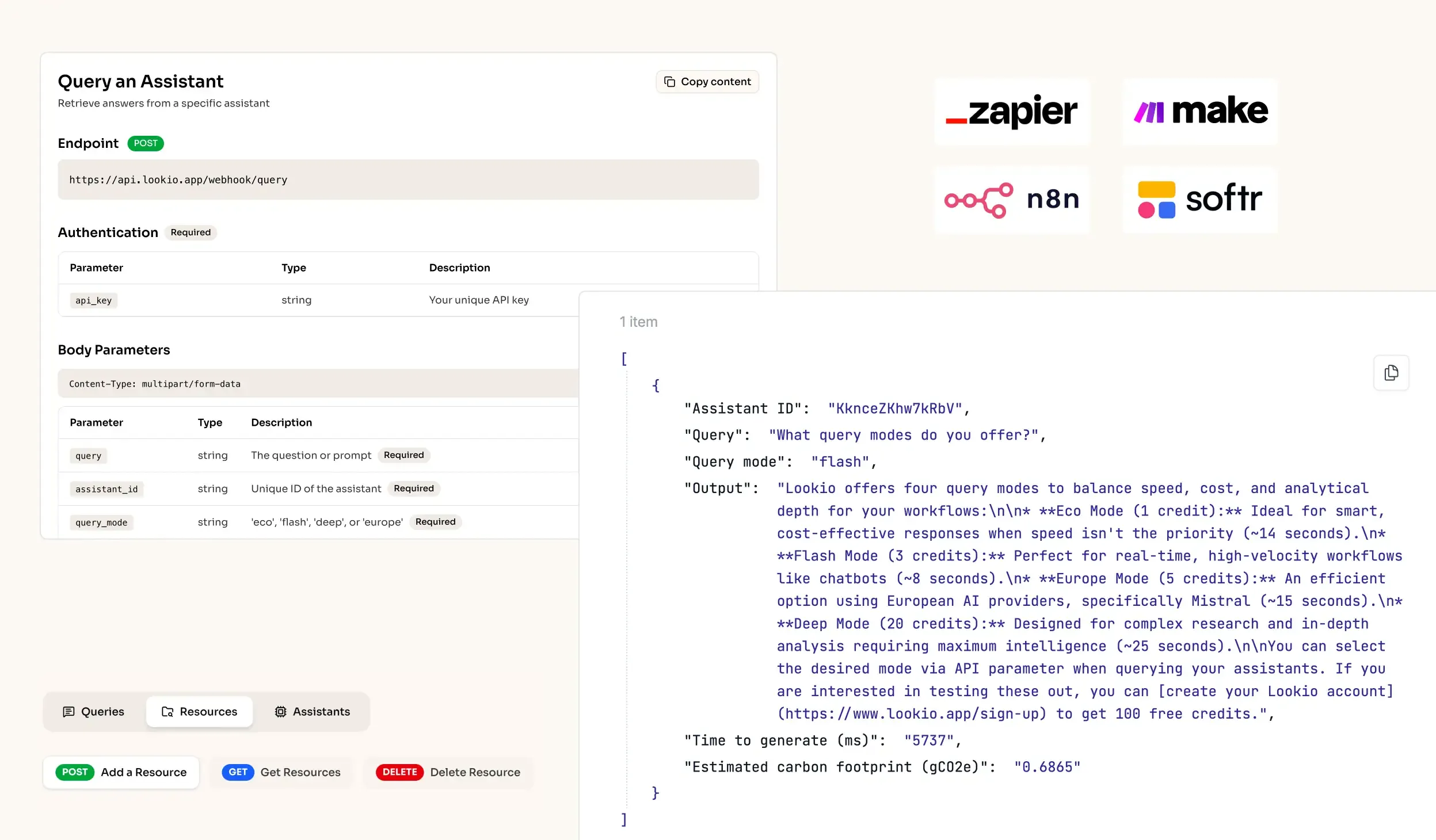

- NotebookLM and Custom GPTs: These are limited for enterprise use because they lack an API. You cannot trigger them from your ticketing system or automate responses in Slack, forcing your team back into manual copy-pasting.

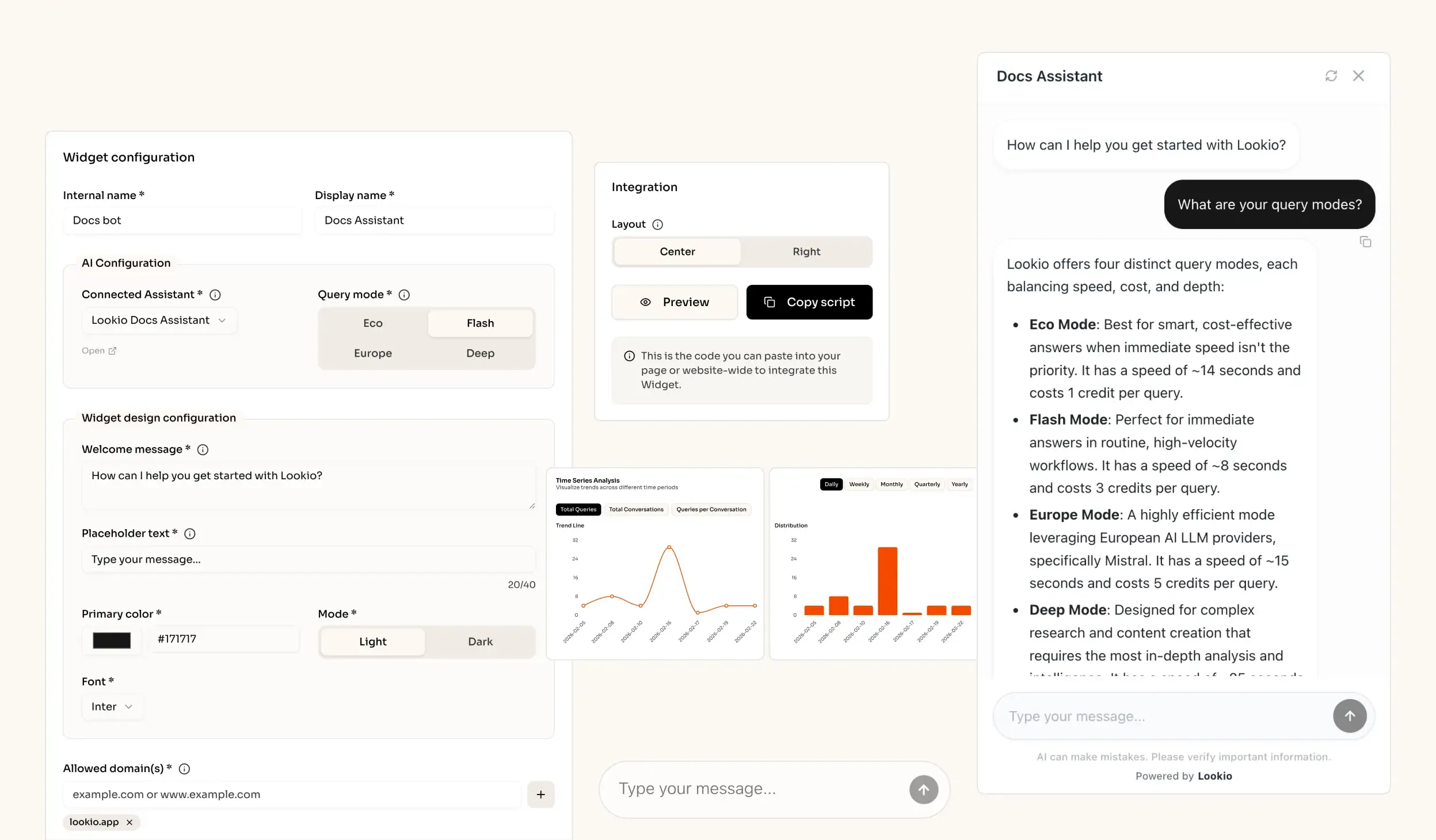
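To make the keyword-matching failure from the wiki example concrete, here is a minimal sketch of a plain keyword search. It is not how any particular wiki product works internally; the `tokenize` and `keyword_search` helpers and the two sample pages are hypothetical, invented only to illustrate the "isolated subnets" versus "private enclaves" mismatch.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def keyword_search(query: str, docs: dict[str, str]) -> list[str]:
    """Return titles of docs whose body contains every query token."""
    terms = tokenize(query)
    return [title for title, body in docs.items() if terms <= tokenize(body)]

# Hypothetical wiki pages, invented for illustration only.
docs = {
    "Network hardening guide": "Deploy databases in private enclaves with no internet route.",
    "Billing FAQ": "Invoices are issued on the first day of each month.",
}

# The engineer's wording differs from the doc's wording, so nothing matches.
print(keyword_search("isolated subnets", docs))   # []

# Only the exact vocabulary used by the author finds the page.
print(keyword_search("private enclaves", docs))   # ['Network hardening guide']
```

The relevant page exists in both cases; the search only succeeds when the engineer happens to guess the author's terminology.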

What’s missing is a programmatic way to turn your proprietary technical stack into a verified source of truth that agents can query instantly.